The Stack I Wish I Found Sooner

I used to think building AI agents was mostly about picking the right model.

Then I actually built a few.

And realized the hard part is not the LLM. The hard part is everything around it:

- tool calling

- memory

- retries

- structured outputs

- async workflows

- scraping

- observability

- and making the agent stop hallucinating itself into chaos

After a lot of trial and error, broken demos, and agents that confidently did the wrong thing, I found a stack that finally made my agents feel stable and usable.

Here are the 8 Python libraries that made the difference.

1. LangChain

The agent framework that makes tool use practical

LangChain is not perfect, but it solves a real problem: wiring together prompts, tools, memory, and chains without reinventing everything.

What I use it for:

- Tool calling with function schemas

- Retrieval pipelines (RAG)

- Prompt templates

- Conversation memory

- Agent routing

If you are building agents that need to call tools and retrieve knowledge, LangChain can save you weeks of glue code.

Example: simple tool + agent setup

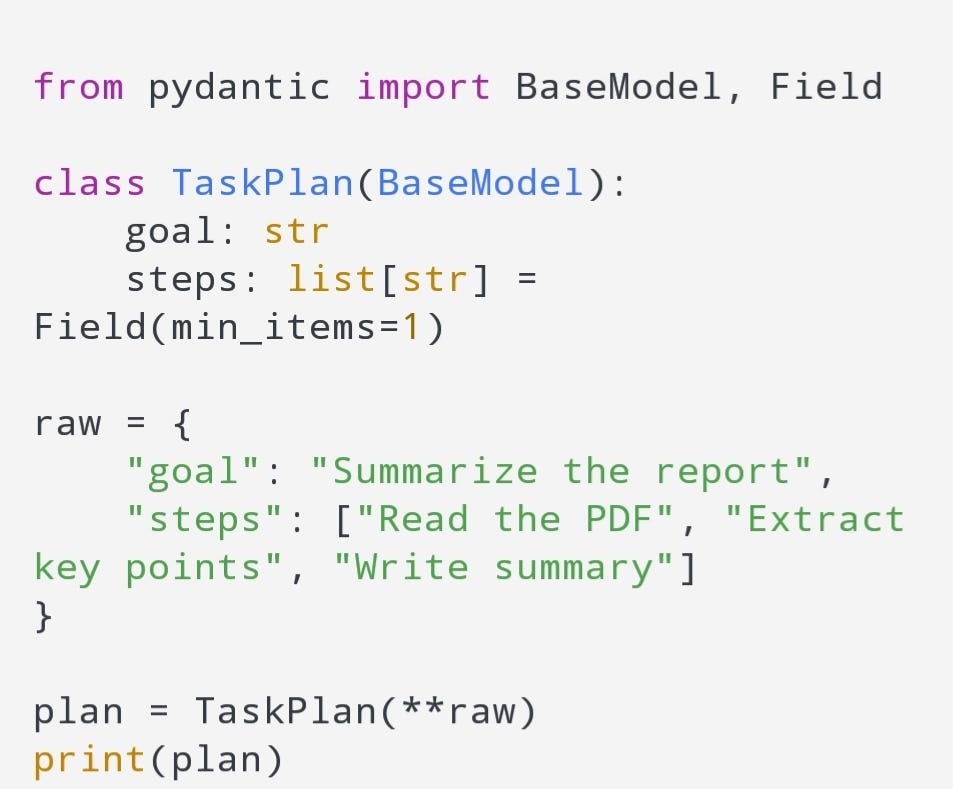

2. Pydantic

The library that stops agents from returning garbage

If your agent returns JSON and you trust it without validation, you will suffer.

Pydantic is the difference between a toy agent and a production-grade agent.

It forces structured output, validates fields, and catches failures early.

Example: validate an agent response
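A minimal sketch of that validation step, assuming Pydantic v2. The AgentAction schema and its fields are hypothetical; swap in whatever shape your agent is supposed to return.

```python
from typing import Optional

from pydantic import BaseModel, Field, ValidationError


class AgentAction(BaseModel):
    """Hypothetical shape the agent's JSON reply is expected to follow."""

    tool: str
    query: str = Field(min_length=1)
    confidence: float = Field(ge=0.0, le=1.0)


def parse_action(raw_json: str) -> Optional[AgentAction]:
    """Return a validated action, or None when the reply is malformed."""
    try:
        return AgentAction.model_validate_json(raw_json)
    except ValidationError as exc:
        # Invalid JSON and out-of-range fields both end up here.
        print(f"Rejected agent reply with {exc.error_count()} error(s)")
        return None


good = parse_action('{"tool": "search", "query": "python retries", "confidence": 0.9}')
bad = parse_action('{"tool": "search", "query": "", "confidence": 1.7}')
print(good)
print(bad)
```

The point of returning None instead of raising is that the caller can decide what to do next: re-prompt the model, fall back to a default, or stop the run.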

In real agent pipelines, Pydantic catches a large share of the weird crashes at the boundary, before bad data reaches the rest of your code.

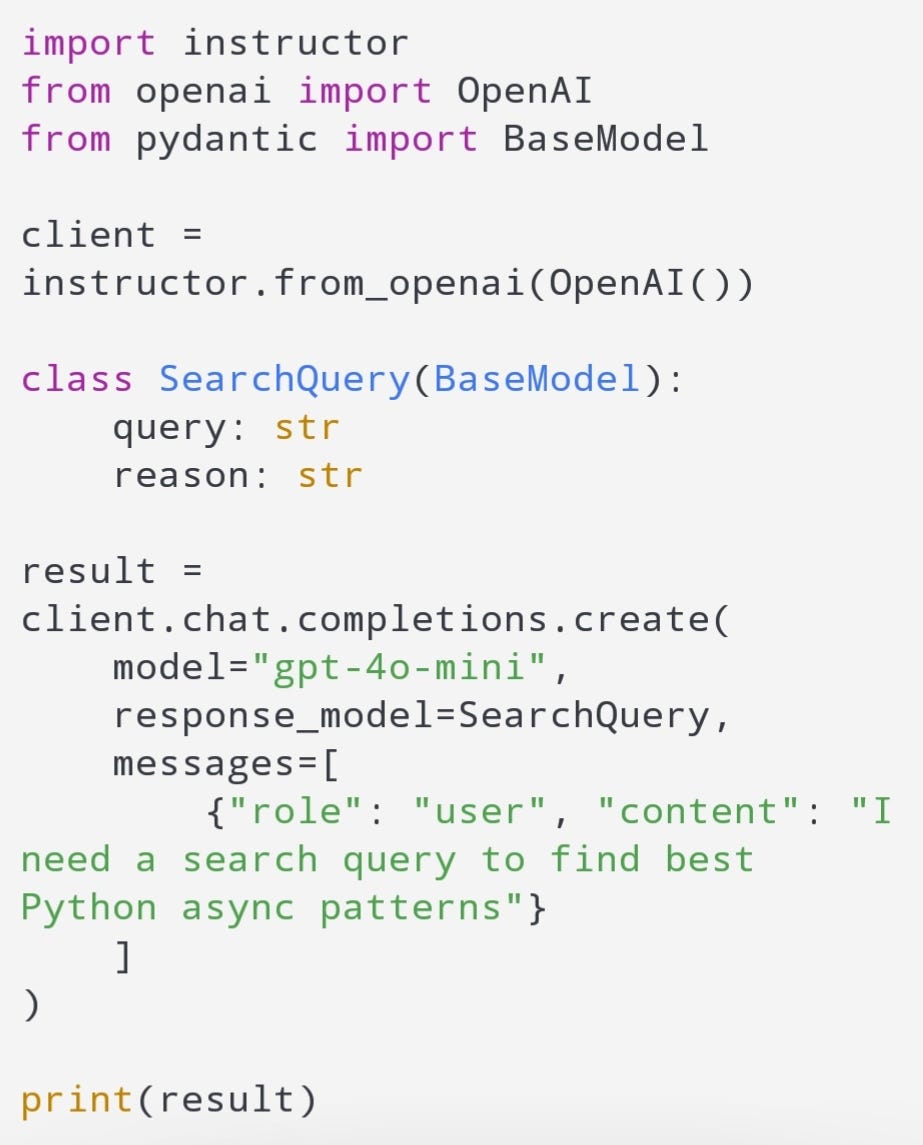

3. Instructor

The easiest way to get reliable structured LLM output

Instructor is one of those libraries that feels like cheating.

Instead of begging the model for valid JSON, you give it a Pydantic schema and it returns a validated object that matches the schema.

This dramatically improves agent reliability.

Example: force structured output
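Here is a rough sketch of how that can look, assuming the instructor and openai packages and an OpenAI API key in the environment. The ToolDecision schema, the prompt, and the model name are all placeholders I picked for illustration. The client is passed in as an argument so the decision logic can be exercised with a fake client in tests.

```python
from pydantic import BaseModel, Field


class ToolDecision(BaseModel):
    """Hypothetical schema for one routing decision."""

    tool: str
    reason: str
    confidence: float = Field(ge=0.0, le=1.0)


def decide(client, task: str, model: str = "gpt-4o-mini") -> ToolDecision:
    """Ask the model which tool to use and get a ToolDecision back."""
    # response_model is the argument Instructor adds to the usual create() call.
    return client.chat.completions.create(
        model=model,  # assumed model name, use whatever you have access to
        response_model=ToolDecision,
        messages=[{"role": "user", "content": f"Pick one tool for this task: {task}"}],
    )


def build_client():
    """Create an Instructor-patched OpenAI client (needs OPENAI_API_KEY)."""
    # Imported here so the schema above stays usable without these packages.
    import instructor
    from openai import OpenAI

    return instructor.from_openai(OpenAI())
```

Calling decide(build_client(), "find the latest httpx release") would then return a ToolDecision instance rather than a raw string, so the rest of the agent can read decision.tool directly.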

If you build agents that make decisions, Instructor is a game changer.

4. Tenacity

Retries that make your agent stop failing randomly

Agents fail constantly for boring reasons:

- timeouts

- rate limits

- flaky APIs

- tool failures

- network issues

Tenacity gives you clean retry logic without messy loops.

Example: retry tool calls

If you do not use retries, your agent will feel unreliable even if your logic is perfect.

5. httpx

The HTTP client that makes agents fast and async-ready

Agents are basically tool callers.

And tools often mean HTTP.

httpx is clean, modern, and async-friendly, and it is a better fit than the requests library when your agent needs to run multiple calls at once.

Example: async API call

If your agent does 5–10 tool calls per task, async httpx is a huge win, because independent calls can run concurrently instead of one after another.

6. BeautifulSoup (bs4)

The scraping library agents still rely on

Yes, even in 2026, agents scrape.

If your agent needs to:

- read documentation

- parse blogs

- extract product info

- collect public data

BeautifulSoup remains the simplest reliable solution.

Example: extract page title
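A short sketch of that, using an inline HTML string I invented so the example runs offline. In a real agent the HTML would come from an HTTP response body.

```python
from bs4 import BeautifulSoup

# Made-up page content; normally this is the text of a fetched page.
html = """
<html>
  <head><title>Tenacity Docs</title></head>
  <body>
    <h1>Retrying</h1>
    <a href="/api">API</a>
    <a href="/changelog">Changelog</a>
  </body>
</html>
"""

# "html.parser" is the parser bundled with Python, so no extra install is needed.
soup = BeautifulSoup(html, "html.parser")

# Guard against pages that have no <title> tag at all.
title = soup.title.get_text(strip=True) if soup.title else None

# Collect link targets, skipping anchors without an href attribute.
links = [a["href"] for a in soup.find_all("a", href=True)]

print(title)
print(links)
```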

It is not fancy, but it works. And for agents, working beats fancy.

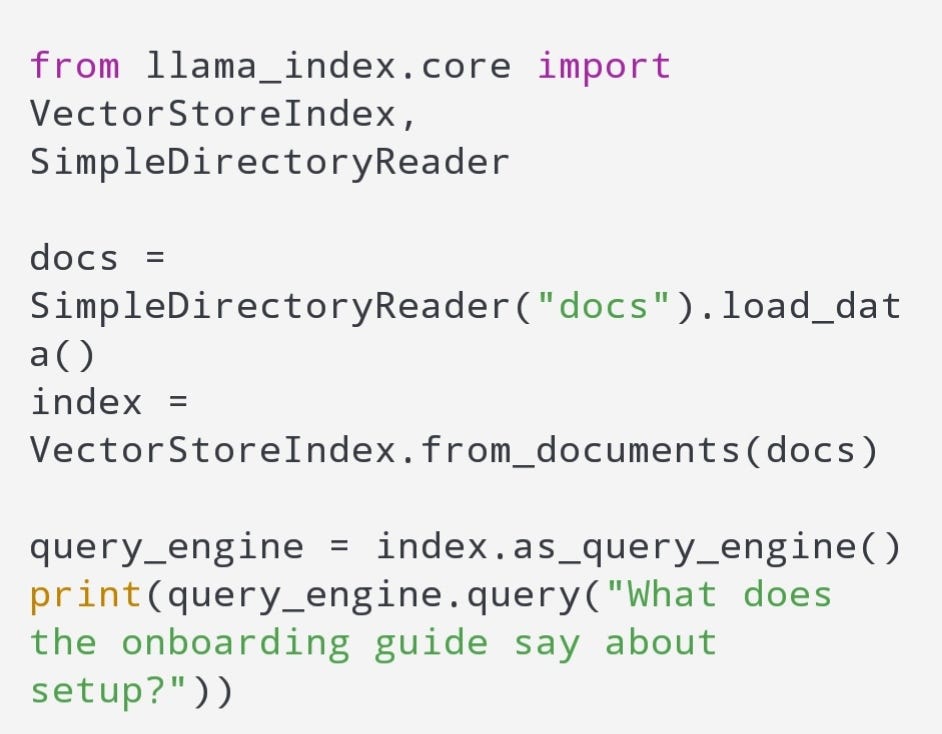

7. LlamaIndex

The RAG engine that makes retrieval actually usable

If you are building agents that need memory and knowledge, you need retrieval.

LlamaIndex is one of the best libraries for:

- indexing documents

- chunking strategies

- embeddings + vector search

- building RAG pipelines

- query routing

It is cleaner than most people expect.

Example: simple doc indexing
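Real LlamaIndex indexing needs an embedding model, which usually means an API key, so instead here is a toy, standard-library-only sketch of the same three steps: chunk, embed, search. This is not LlamaIndex's API. Every function name below is hypothetical, and the bag-of-words "embedding" is a deliberately crude stand-in for a learned model.

```python
import math
import re
from collections import Counter


def chunk(text, size=12):
    """Split text into chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text):
    """Toy embedding: lowercase word counts instead of a learned vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def build_index(docs):
    """Index = list of (chunk text, chunk vector) pairs."""
    return [(c, embed(c)) for doc in docs for c in chunk(doc)]


def retrieve(index, question, top_k=1):
    """Return the top_k chunks most similar to the question."""
    q = embed(question)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:top_k]]


docs = [
    "Tenacity adds retry logic with backoff to flaky tool calls.",
    "BeautifulSoup parses HTML so an agent can read web pages.",
]
index = build_index(docs)
top = retrieve(index, "How do I parse HTML pages?")
print(top)
```

In LlamaIndex itself, the equivalent flow is roughly VectorStoreIndex.from_documents(...) followed by index.as_query_engine(), with the chunking and embedding handled for you.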

This is where agents stop feeling like chatbots and start feeling like assistants.

8. Langfuse

The observability tool that reveals why your agent is dumb

If you do not log traces, you will waste weeks debugging.

Agent bugs are invisible unless you can see:

- what prompt was used

- what tools were called

- what the model responded

- how long each step took

- where the failure happened

Langfuse gives you that visibility.

It is basically the difference between guessing why your agent failed and knowing exactly why it failed.

Once you start tracing agents, you never go back.
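To make the idea concrete, here is a standard-library sketch of what a trace record captures: the step name, the inputs, the output or error, and the duration. This is not the Langfuse SDK, whose decorator imports have changed between major versions. The traced decorator and the in-memory TRACE list are hypothetical stand-ins for a real tracing backend.

```python
import functools
import time

TRACE = []  # in-memory stand-in for a tracing backend


def traced(step_name):
    """Record input, output or error, and duration for each call."""

    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            record = {"step": step_name, "input": {"args": args, "kwargs": kwargs}}
            try:
                record["output"] = func(*args, **kwargs)
                record["status"] = "ok"
                return record["output"]
            except Exception as exc:
                record["status"] = "error"
                record["error"] = repr(exc)
                raise
            finally:
                # Runs on success and failure, so every step gets logged.
                record["seconds"] = time.perf_counter() - start
                TRACE.append(record)

        return wrapper

    return decorator


@traced("search_tool")
def search(query):
    return [f"hit for {query}"]


@traced("broken_tool")
def broken(query):
    raise TimeoutError("upstream timed out")


search("pydantic validators")
try:
    broken("anything")
except TimeoutError:
    pass

for record in TRACE:
    print(record["step"], record["status"], f"{record['seconds']:.4f}s")
```

A hosted tool adds what this sketch lacks: nesting of steps into one trace per run, a UI to browse them, and storage that survives the process.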

The Real Agent Stack (The One That Finally Worked)

If I had to rebuild from scratch, I would use this exact setup:

Core agent framework

- LangChain (or your own lightweight wrapper)

Structured output

- Pydantic + Instructor

Retrieval

- LlamaIndex

Tool calling reliability

- Tenacity + httpx

Web parsing

- BeautifulSoup

Debugging and observability

- Langfuse

This stack does not just build agents.

It makes them stable.

The Hard Truth About AI Agents

Most AI agents fail for boring reasons:

- tool calls return unexpected data

- output is not validated

- no retries

- bad retrieval

- no tracing

- async bugs

- prompts are untestable

These libraries fix the boring parts.

And once the boring parts are solved, the agent suddenly feels smart.

Writer: Mansheh